Overview

This page explains the basics of how Tinfoil provides verifiable privacy on each connection. Tinfoil uses confidential computing enabled by secure hardware enclaves to create a verifiably private runtime environment in the cloud. The hardware measures and attests the code running in the enclave, and we connect these measurements to open source code using transparency logs to ensure end-to-end supply chain security.

To make it easier to understand the different components involved, we must first cover the three main parts that make up the Tinfoil system. We have:

- a trusted client that runs one of the Tinfoil SDKs and makes requests,

- an untrusted host machine operated by Tinfoil, and

- a secure hardware enclave that runs a server in isolation from the host.

Together, these parts are meant to provide three guarantees:

- The enclave server is running code that is auditable and immutable

- The enclave server computation is isolated from the host operator

- The client request data is end-to-end encrypted to the enclave (only the enclave can decrypt it)

Example: Private Inference

To provide a concrete example, consider our Private Inference service. Simplifying slightly, we have an enclave with an AI model loaded inside of it. This enclave is running an open-source inference server (we use vLLM) that serves the model and exposes the /v1/chat/completions API endpoint. A client sends encrypted requests to this endpoint. The enclave decrypts these requests (only the enclave has the decryption key) and forwards them to the inference server running inside. The response is then encrypted and sent back to the client.

The problem of code transparency and confidentiality

How can the client be convinced that the enclave is running vLLM as the inference server and not some other (potentially evil) code? Additionally, how can the client verify that the end-to-end encryption terminates at the enclave and isn’t man-in-the-middled by the host? You can easily imagine a scenario where the host pretends to be the enclave and decrypts the client’s request, violating confidentiality. We answer these questions in two parts.

Part I: Verifying code transparency with remote attestation

The first thing the client needs to be able to do is verify that the enclave is configured correctly and running the expected code. This is achieved with remote attestation. The hardware manufacturer provides a root of trust, which is used to prove what’s running on its own hardware using a signing key embedded at manufacture time.

In a nutshell, the CPU comes with a secret key fused into it by the manufacturer (e.g., Intel or AMD). This key is used to sign the initial configuration and state of the CPU and memory at boot time. The host cannot access this signing key, and extraction of the key is assumed impossible. At boot time, the hardware measures the initial state, generating a signed attestation report of the exact launch configuration, such as the firmware, kernel, security parameters, and the application binary running in the enclave.

The attestation report can be seen as a cryptographic fingerprint of this entire launch configuration. If the same configuration is loaded twice, the fingerprints match. However, if any component differs (e.g., a firmware change, a modified kernel, or a different binary), then the fingerprints won’t match.

This gets us part of the way towards full code transparency. The missing link is connecting the fingerprint to human-readable code that can be inspected for correctness and audited for security.

Linking fingerprints to code

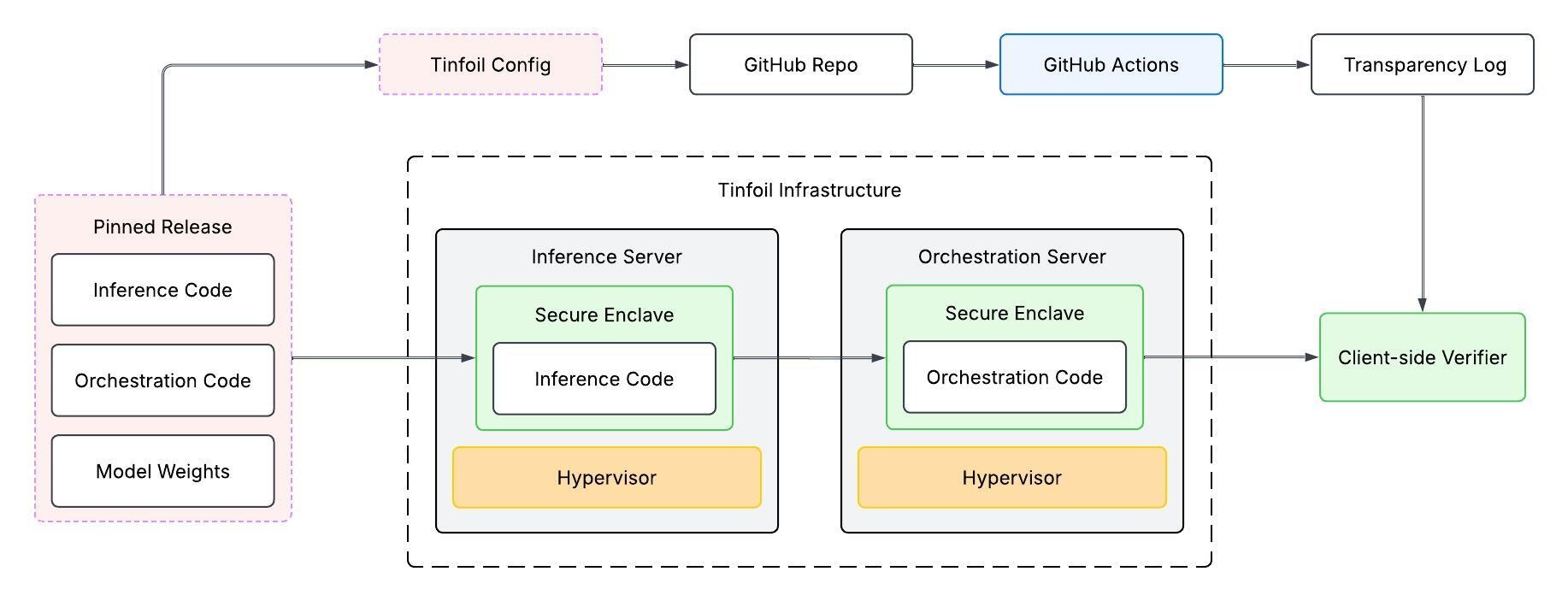
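As a rough illustration of the fingerprint property described above, the sketch below hashes a launch configuration in a fixed order. It is a toy model only: the function name, the component names, and the use of SHA-384 are assumptions made for this example, and real hardware attestation reports are produced and signed by the CPU in a vendor-specific format.

```python
import hashlib


def launch_fingerprint(components: dict[str, bytes]) -> str:
    """Toy stand-in for a hardware launch measurement (not a real report format)."""
    digest = hashlib.sha384()
    for name in sorted(components):  # fixed order, so equal configs hash equally
        label = name.encode()
        blob = components[name]
        # Length-prefix each field so component boundaries are unambiguous.
        digest.update(len(label).to_bytes(4, "big") + label)
        digest.update(len(blob).to_bytes(8, "big") + blob)
    return digest.hexdigest()


# Hypothetical launch configuration; the byte strings are placeholders.
baseline = {
    "firmware": b"firmware-v1",
    "kernel": b"kernel-v1",
    "security_parameters": b"debug=off",
    "application": b"inference-server-v1",
}
modified = {**baseline, "kernel": b"kernel-v1-patched"}

same_config_matches = launch_fingerprint(baseline) == launch_fingerprint(dict(baseline))
changed_kernel_matches = launch_fingerprint(baseline) == launch_fingerprint(modified)
```

Loading the same configuration twice gives equal fingerprints, while changing any single component (here the kernel) gives a different one, which is why a non-reproducible build breaks a naive rebuild-and-compare check.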

All the code running inside the enclave is published to our GitHub and made open source. In theory, you could rebuild the same binary, go through the attestation process yourself, and then see what fingerprint comes out the other end. If the fingerprint you derive matches the one obtained through remote attestation, then you can be convinced that the code running in the enclave was built from the open-source code published on our GitHub repo.

However, this runs into some complications. First, ensuring reproducible builds is a challenging problem: compilers can be non-deterministic, so the same code may compile to different binaries. Recall that if the binaries do not match, then the fingerprints won’t either, making this verification process fail even though we would have wanted it to pass. Second, relying on reproducible builds creates hurdles in verifying the full supply chain efficiently, since you would need to download the code, build it, and measure everything on the same hardware configuration in order to compare the fingerprints.

Leveraging transparency logs

Instead of relying on reproducible builds, we designed a simpler approach that provides the same supply chain transparency guarantees while enabling more efficient verification and auditability. The idea is to have GitHub build the binary for us using the same host and enclave configuration that we plan on running in production. The GitHub build process then publishes the resulting attestation measurements onto an immutable transparency log (we use Sigstore, a transparency log managed by the Linux Foundation). This transparency log independently attests that the binary was compiled by GitHub itself and produced the “ground truth” fingerprint. This makes it so that anyone can easily compare the ground truth fingerprint to the one received from the Tinfoil enclave, resulting in full supply chain transparency.

Part II: Providing end-to-end encryption

The second problem is ensuring that the connection from the client is encrypted directly to the enclave. How can the client verify that the public key it is encrypting all the requests with was generated by the enclave and not the host? This requires tying the public key to the signed attestation report, proving it was generated by the enclave itself.

At boot time, the enclave generates an encryption key pair, consisting of a public key and a secret key. The secret key is stored in the enclave’s encrypted memory while the public key is made part of the attestation report. When the client connects to the enclave, all data sent over that connection is encrypted using the enclave’s attested public key.

We additionally embed the full attestation report into the SAN field of the certificate issued to the enclave. We do this to bind the attestation report to a *.tinfoil.sh domain and prevent any third-party enclave from cosplaying as a Tinfoil-controlled enclave.
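The key-binding check can be sketched as follows. This is a minimal illustration under stated assumptions, not the SDK's implementation: it assumes the report commits to the key through a SHA-256 digest, that the report's hardware signature has already been verified, and the function and variable names are hypothetical.

```python
import hashlib
import hmac


def key_is_attested(public_key: bytes, attested_key_digest: bytes) -> bool:
    """Return True if the offered key is the one the attestation report commits to."""
    offered = hashlib.sha256(public_key).digest()
    # compare_digest avoids leaking where the two values first differ.
    return hmac.compare_digest(offered, attested_key_digest)


# Placeholder byte strings standing in for real public keys.
enclave_key = b"enclave-public-key"
host_key = b"host-substituted-key"

# Digest as it would appear in an already signature-checked report (assumed field).
attested_digest = hashlib.sha256(enclave_key).digest()

enclave_key_ok = key_is_attested(enclave_key, attested_digest)
host_key_ok = key_is_attested(host_key, attested_digest)
```

A host that swaps in its own key fails the check, because it cannot produce a hardware-signed report that commits to that key.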

How our SDKs automatically verify everything

Our SDKs are all open-source and run all verification logic client side. We do not use any proprietary or third-party attestation services to verify attestation reports, since doing so results in circular security and defeats the point of doing any verification in the first place. On each connection, our SDKs automatically fetch the expected enclave measurements from GitHub and Sigstore and then proceed to verify the enclave attestation report.

The whole client-side verification proceeds by first assembling the necessary bundles, validating the configuration of the enclave, and verifying the cryptographic signatures. First, the SDK obtains the Sigstore bundle associated with the latest enclave code release published on the GitHub repo. Second, it obtains the attestation report generated by the remote enclave. In our SDKs, these values are assembled and provided by Tinfoil to minimize the number of requests made to GitHub, Sigstore, and the enclave. Proxying and caching this verification material on Tinfoil servers does not harm security because each component is signed independently by Sigstore and the hardware manufacturer.

Once all the verification material is assembled, the SDK compares the expected Sigstore-provided measurements to the enclave-attested measurements. If these don’t match, the SDK throws an error and stops all connections from being established. If the measurements match, the client creates an encrypted connection to the enclave using the public key from the attestation report.

Chaining enclaves

The process described above is essentially what happens when you connect to inference.tinfoil.sh using one of our SDKs and make inference requests.
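The single-enclave process referred to above can be sketched roughly as below. All names here (`ReleaseBundle`, `AttestationReport`, `verify_enclave`, `VerificationError`) are hypothetical and do not reflect the SDKs' actual API, and the two signature checks are passed in as callables because real Sigstore and hardware signature verification is out of scope for a short example.

```python
from dataclasses import dataclass
from typing import Callable
import hmac


class VerificationError(Exception):
    """Raised when any verification step fails; no connection should follow."""


@dataclass(frozen=True)
class ReleaseBundle:  # hypothetical stand-in for the Sigstore bundle
    measurement: str


@dataclass(frozen=True)
class AttestationReport:  # hypothetical stand-in for the enclave's report
    measurement: str
    public_key: bytes


def verify_enclave(
    bundle: ReleaseBundle,
    report: AttestationReport,
    bundle_signature_ok: Callable[[ReleaseBundle], bool],
    report_signature_ok: Callable[[AttestationReport], bool],
) -> bytes:
    """Return the attested public key, or raise if any check fails."""
    if not bundle_signature_ok(bundle):
        raise VerificationError("transparency log bundle failed verification")
    if not report_signature_ok(report):
        raise VerificationError("attestation report failed verification")
    if not hmac.compare_digest(bundle.measurement.encode(), report.measurement.encode()):
        raise VerificationError("enclave measurement does not match the release")
    return report.public_key


def accept(_: object) -> bool:
    """Placeholder for a real signature check."""
    return True


bundle = ReleaseBundle(measurement="ab12")
good = AttestationReport(measurement="ab12", public_key=b"enclave-key")
bad = AttestationReport(measurement="ff00", public_key=b"other-key")

session_key = verify_enclave(bundle, good, accept, accept)
try:
    verify_enclave(bundle, bad, accept, accept)
    mismatch_rejected = False
except VerificationError:
    mismatch_rejected = True
```

The function only hands back a key once every check has passed, so a caller cannot accidentally encrypt to an unverified enclave.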

However, in our production deployment, we use multiple enclaves to serve the same model (this allows us to load balance requests) and additionally, for convenience, we need a model router server. Without a model router, we’d need to have all models running on the same machine associated with inference.tinfoil.sh, which is not reasonable to do in production.

The model router is responsible for proxying requests to the right enclave. Because the router sits between the client and the inference enclaves, it must be verifiable too. The solution is to chain enclaves. The router runs inside its own enclave. The client-side SDKs verify the router enclave and ensure the transparency of the router code. The router server then does the same verification for each enclave it routes to. Similarly, all data from the router to the inference server is encrypted with an attested key, ensuring that the whole pipeline from client to model keeps data encrypted from the point of view of the host. By chaining the attestations, we get end-to-end code transparency and confidentiality, even though the client-side SDKs only need to verify the first enclave in this chain.

Figure 1: Simple view of model router and inference attestation.
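The chaining idea can be sketched in a few lines. This is an illustrative model under simplifying assumptions: attestation is reduced to comparing a measurement string against an expected value, and the `Enclave`, `Router`, `check`, and `send` names are hypothetical rather than part of any Tinfoil component.

```python
from dataclasses import dataclass


class AttestationError(Exception):
    """Raised when an enclave's measurement is not the expected one."""


@dataclass(frozen=True)
class Enclave:
    name: str
    measurement: str  # placeholder for a verified attestation measurement


def check(enclave: Enclave, expected: str) -> Enclave:
    """Return the enclave if its measurement matches, otherwise raise."""
    if enclave.measurement != expected:
        raise AttestationError(f"{enclave.name}: unexpected measurement")
    return enclave


@dataclass(frozen=True)
class Router:
    identity: Enclave
    backends: dict[str, Enclave]  # model name -> inference enclave
    expected: dict[str, str]      # model name -> expected measurement

    def route(self, model: str) -> Enclave:
        # The router repeats the client's check for the enclave it forwards to.
        return check(self.backends[model], self.expected[model])


def send(router: Router, expected_router: str, model: str) -> str:
    """Client side: verify only the first hop, then let the router verify the next."""
    check(router.identity, expected_router)
    return router.route(model).name


router = Router(
    identity=Enclave("router", "r1"),
    backends={
        "model-a": Enclave("inference-a", "m1"),
        "model-b": Enclave("inference-b", "tampered"),
    },
    expected={"model-a": "m1", "model-b": "m2"},
)
served_by = send(router, "r1", "model-a")
```

The client never inspects the inference enclaves directly. It relies on the fact that the verified router code performs the same check before forwarding anything.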

In-band vs. out-of-band verification

The verification described above is connection-time (in-band) verification: the client verifies the enclave’s attestation before exchanging any data. This is what our SDKs do automatically on every connection. Tinfoil also supports audit-time (out-of-band) verification, where an auditor verifies an enclave after the fact by inspecting material committed to an append-only transparency log.

Audit-time verification through attestation transparency

Audit-time verification relies on embedding attestation evidence into TLS certificates, which are then recorded in public certificate transparency (CT) logs. This serves two purposes: (1) it creates a public audit trail of every enclave boot, and (2) it binds the attestation to a *.tinfoil.sh domain, preventing a third-party enclave from impersonating a Tinfoil-controlled enclave.

At boot time, each enclave generates a fresh ECDSA key pair for TLS and an HPKE key pair for application-layer encryption (used by EHBP). It then requests a CPU attestation report that covers both the TLS key fingerprint and the HPKE public key, cryptographically binding them to the measured enclave state. The attestation report is serialized into a compact format and embedded in the Subject Alternative Name (SAN) field of a standard X.509 TLS certificate.

To obtain this certificate, the enclave registers an ACME identity and submits a certificate signing request to a public certificate authority, including the attestation-bearing SAN extension. The CA issues the certificate, which is automatically recorded in public CT logs. The resulting certificate ties together the enclave’s TLS and HPKE public keys, its CPU attestation report, and its *.tinfoil.sh domain into a single publicly logged artifact.

Why the SAN field?

The SAN field is the standard X.509 extension that binds a certificate to specific domain names. By encoding the full CPU attestation report into this field alongside the *.tinfoil.sh domain, every TLS certificate issued for a Tinfoil enclave carries a cryptographic proof of the code that was running when the certificate was requested.

This has two consequences:

- Tamper detection: All publicly trusted TLS certificates are logged in CT logs. Any attempt to issue a certificate for a Tinfoil domain without valid attestation would be visible in the public record.

- Auditability: Anyone can inspect CT logs for *.tinfoil.sh certificates and decode the embedded attestation to verify what code was running.
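A very rough sketch of the tamper-detection idea: given CT log entries that have already been fetched and parsed, flag certificates for Tinfoil domains that carry no attestation evidence. The `LoggedCertificate` type and its fields are hypothetical, the sketch does not decode the real SAN encoding, and it checks only for presence; a real audit would also verify the attestation itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LoggedCertificate:  # hypothetical, already-parsed CT log entry
    dns_names: tuple[str, ...]
    attestation: Optional[bytes]  # attestation bytes decoded from the SAN field, if any


def covers_tinfoil_domain(cert: LoggedCertificate) -> bool:
    """True if any name on the certificate falls under *.tinfoil.sh."""
    return any(name.endswith(".tinfoil.sh") for name in cert.dns_names)


def missing_attestation(log: list[LoggedCertificate]) -> list[LoggedCertificate]:
    """Certificates for Tinfoil domains that carry no attestation evidence."""
    return [c for c in log if covers_tinfoil_domain(c) and not c.attestation]


# Placeholder log entries for illustration only.
log = [
    LoggedCertificate(("inference.tinfoil.sh",), b"report-bytes"),
    LoggedCertificate(("rogue.tinfoil.sh",), None),
    LoggedCertificate(("example.org",), None),
]
suspicious = missing_attestation(log)
```

Only the certificate for a Tinfoil domain without attestation is flagged. Certificates for unrelated domains are ignored.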

Backend Infrastructure Deep Dive

Explore the full backend architecture, including the CVM image, Sigstore integration, and the build-to-deployment lifecycle.